
8/22/2023 0 Comments

Nvidia GPU Computing Toolkit

We've previously covered the Nvidia H100 Hopper chip, which is currently Nvidia's most powerful data center GPU. It powers AI models like OpenAI's ChatGPT, and it marked a significant upgrade over 2020's A100 chip, which powered the first round of training runs for many of the news-making generative AI chatbots and image generators we're talking about today.

Faster GPUs roughly translate into more powerful generative AI models because they can run more matrix multiplications in parallel (and do it faster), which is necessary for today's artificial neural networks to function.

The GH200 takes that "Hopper" foundation and combines it with Nvidia's "Grace" CPU platform (both named after computer pioneer Grace Hopper), rolling it into one chip through Nvidia's NVLink chip-to-chip (C2C) interconnect technology. Nvidia expects the combination to dramatically accelerate AI and machine-learning applications in both training (creating a model) and inference (running it).

Notably, Nvidia also announced that it will be building this combo CPU/GPU chip into a new supercomputer called the DGX GH200, which can utilize the combined power of 256 GH200 chips to perform as a single GPU, providing 1 exaflop of performance and 144 terabytes of shared memory, nearly 500 times more memory than the previous-generation Nvidia DGX A100. The DGX GH200 will be capable of training giant next-generation AI models (GPT-6, anyone?) for generative language applications, recommender systems, and data analytics. Nvidia did not announce pricing for the GH200, but according to Anandtech, a single DGX GH200 computer is "easily going to cost somewhere in the low 8 digits."

Overall, it's reasonable to say that thanks to continued hardware advancements from vendors like Nvidia and Cerebras, high-end cloud AI models will likely continue to become more capable over time, processing more data and doing it much faster than before. Let's just hope they don't argue with tech journalists.

The default CUDA Toolkit install locations searched are: C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\vX.Y. If exactly one candidate is found, this is used.

I tried different combinations of Nvidia CUDA Toolkit (10.0, 10.1) and Microsoft Visual Studio (2017 - MSVC 14.16, 2019 - MSVC 14.20). I successfully built the project on other machines with the same versions of Visual Studio and nvcc.
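The install-location rule mentioned above (look under the default CUDA directory for versioned `vX.Y` folders and use the result only if exactly one candidate exists) can be sketched in a few lines of Python. This is an illustration of the rule as described here, not the actual search code of CMake or any Nvidia tool; the function names `find_cuda_candidates` and `pick_cuda_root` are hypothetical.

```python
import re
from pathlib import Path

# Default Windows install root named in the text above.
DEFAULT_ROOT = Path(r"C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA")

# Matches versioned toolkit folders such as "v10.0" or "v10.1".
VERSION_DIR = re.compile(r"^v(\d+)\.(\d+)$")


def find_cuda_candidates(root=DEFAULT_ROOT):
    """Return all vX.Y subdirectories of root, sorted by version (hypothetical helper)."""
    root = Path(root)
    if not root.is_dir():
        return []
    found = []
    for child in root.iterdir():
        match = VERSION_DIR.match(child.name)
        if match and child.is_dir():
            version = (int(match.group(1)), int(match.group(2)))
            found.append((version, child))
    found.sort(key=lambda item: item[0])
    return [path for _, path in found]


def pick_cuda_root(root=DEFAULT_ROOT):
    """Return the toolkit directory only when exactly one candidate exists."""
    candidates = find_cuda_candidates(root)
    if len(candidates) == 1:
        return candidates[0]
    # The text only covers the exactly-one case, so this sketch
    # deliberately makes no choice for zero or several candidates.
    return None


if __name__ == "__main__":
    print("candidates:", [p.name for p in find_cuda_candidates()])
    print("selected:", pick_cuda_root())
```

On a machine with both 10.0 and 10.1 installed, this sketch returns `None`, which is a reminder that with several toolkits side by side it may help to tell the build system explicitly which one to use.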

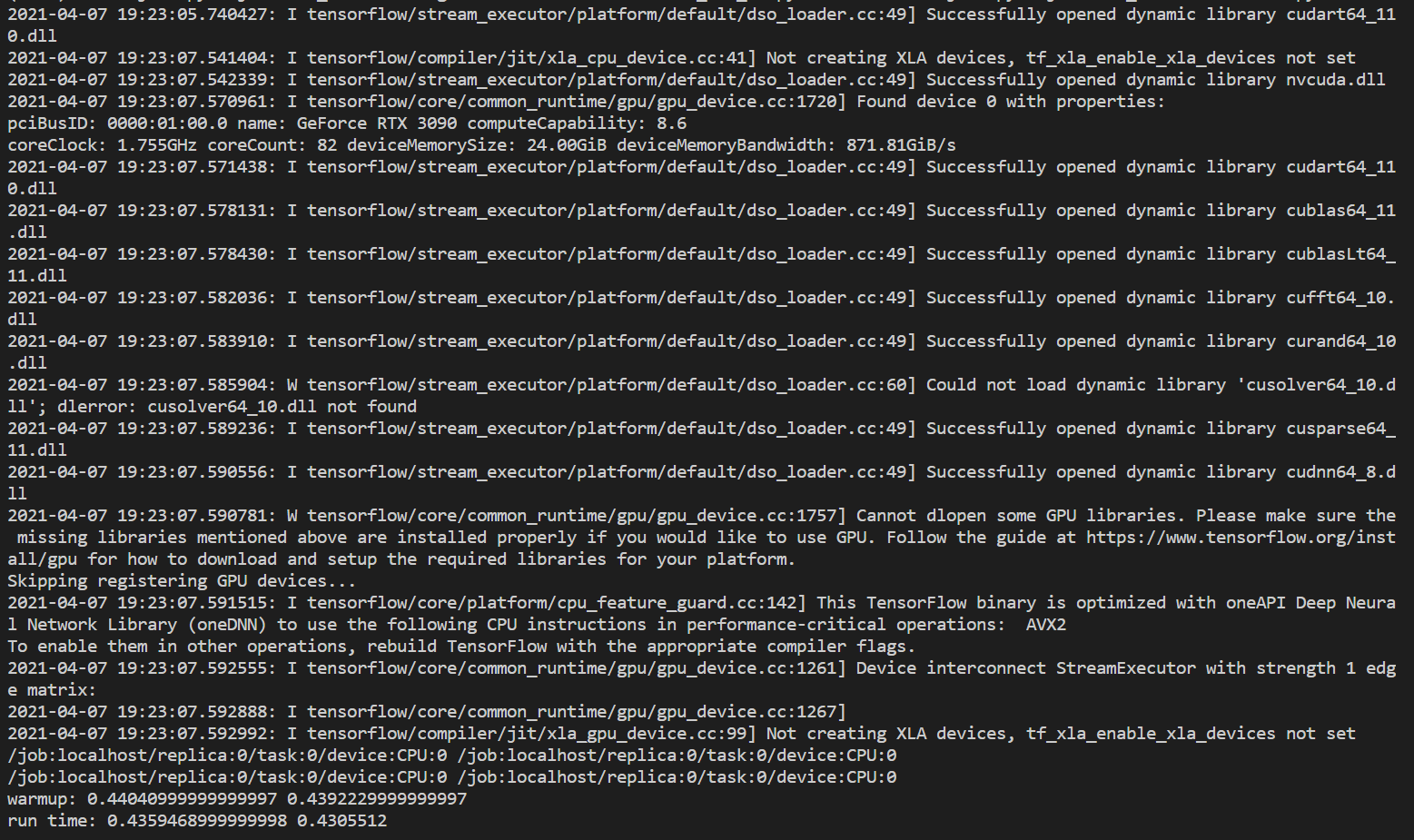

STEP 1) Download and install the latest CUDA Toolkit. You can also download previous versions from the Archive of Previous.

The CMake configure step fails with the following output:

C:/Program Files (x86)/Microsoft Visual Studio/2019/Community/Common7/IDE/CommonExtensions/Microsoft/CMake/CMake/share/cmake-3.13/Modules/CMakeTestCUDACompiler.cmake 46

nvcc fatal : Could not set up the environment for Microsoft Visual Studio using 'C:/Program Files (x86)/Microsoft Visual Studio/2019/Community/VC/Tools/MSVC/8/bin/HostX64/x64/./././././././VC/Auxiliary/Build/vcvars64.bat'

CMake will not be able to correctly generate this project.

Building CUDA object CMakeFiles\cmTC_d4aa6.dir\main.cu.obj

FAILED: CMakeFiles/cmTC_d4aa6.dir/main.cu.obj

cmd.exe /C "C:\PROGRA~1\NVIDIA~2\CUDA\v10.1\bin\nvcc.exe -x cu -c main.cu -o CMakeFiles\cmTC_d4aa6.dir\main.cu.obj
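One thing worth ruling out with this error is whether the `vcvars64.bat` script that nvcc names actually exists on the failing machine. The sketch below assumes the layout visible at the end of the path in the error message, `<Visual Studio install root>/VC/Auxiliary/Build/vcvars64.bat`, and the install root shown there; the function names are hypothetical. A missing script is only one possible cause, so a "found" result does not mean the environment is healthy.

```python
from pathlib import Path


def vcvars64_path(vs_root):
    """Build the expected vcvars64.bat location under a Visual Studio install root."""
    return Path(vs_root) / "VC" / "Auxiliary" / "Build" / "vcvars64.bat"


def check_vcvars64(vs_root):
    """Report whether vcvars64.bat exists under the given install root."""
    path = vcvars64_path(vs_root)
    if path.is_file():
        return f"found: {path}"
    return f"missing: {path}"


if __name__ == "__main__":
    # Install root taken from the error message above; adjust for your machine.
    print(check_vcvars64("C:/Program Files (x86)/Microsoft Visual Studio/2019/Community"))
```

If the script is reported missing, the C++ build tools of that Visual Studio installation may be incomplete, which would be consistent with the same project building on other machines.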
